Are AI Benchmarks Telling the Truth or Just a Convenient Story?

Why current benchmarks are misleading when judging AI models!

What if the intelligence we’re measuring isn’t really understanding but familiarity in disguise?

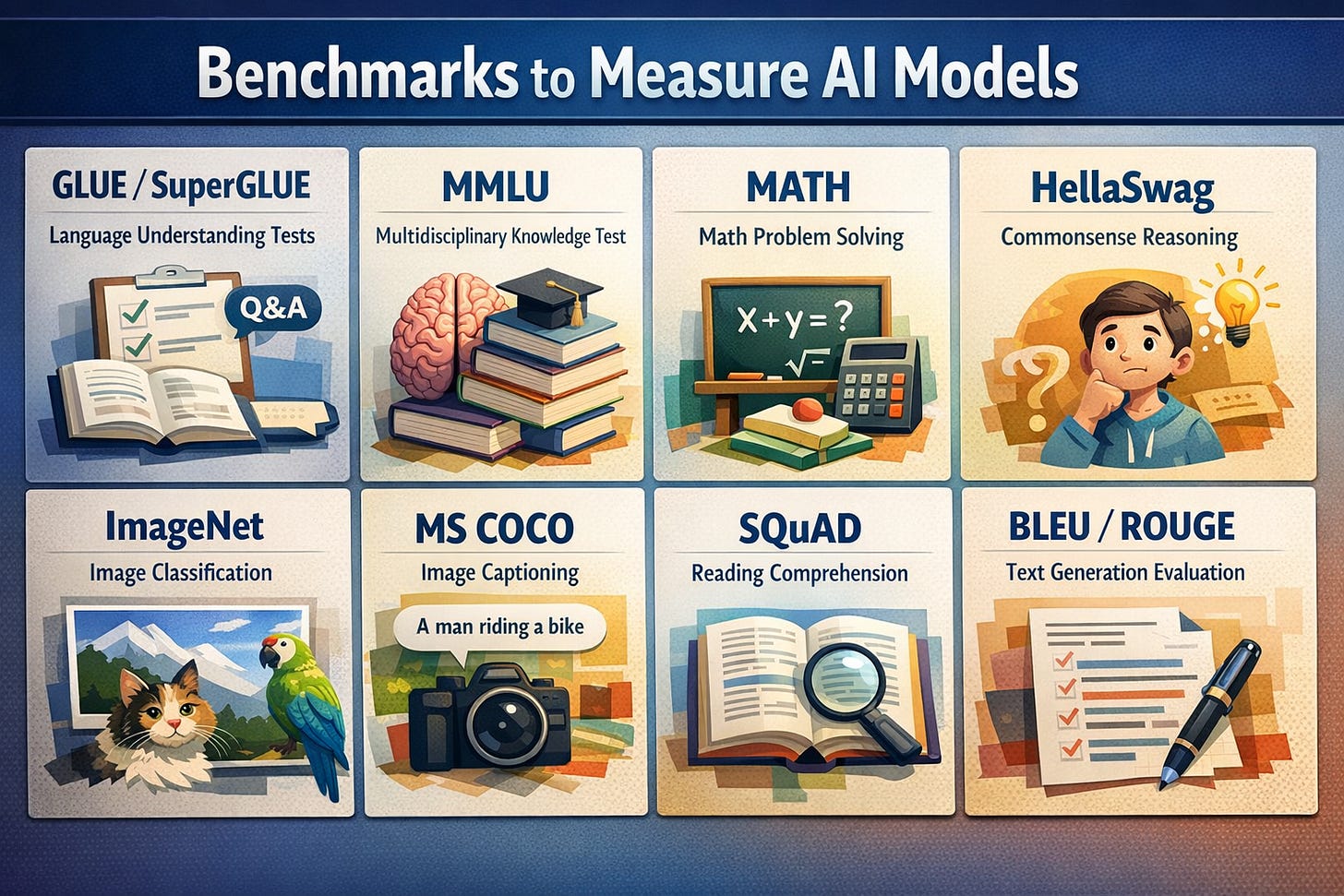

This is the uncomfortable question sitting beneath today’s AI benchmarks. To answer it, we must first understand what a benchmark is when scoring AI models.

A benchmark is just a set of questions or tasks used to check how good an AI model is, much like the standardised tests we take ourselves: APs, the SAT, the GMAT and so on.

On paper, progress looks explosive. Models climb leaderboards, scores inch toward perfection, and every few weeks a new “state-of-the-art” appears. But look closer, and the ground feels less solid. Most benchmarks rely on static, carefully curated datasets: frozen snapshots of problems that don’t evolve with the world they’re meant to represent. Real life isn’t static! It’s noisy, shifting and unpredictable. So we have to pause and ask ourselves:

What exactly are we measuring?

Even more concerning is data contamination. When models are trained on vast swathes of internet data, how can we be sure they haven’t already seen parts of the test sets? If they have, then high performance stops being a sign of reasoning and starts looking like recall. The model isn’t solving the problem, it’s recognising it! That’s not intelligence; it’s déjà vu dressed up as a “reasoning breakthrough”.
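To make the contamination worry concrete, here is a minimal sketch of one common style of check: flag a test question if it shares a long run of consecutive words with the training text. Everything in it is an assumption made for illustration. The function names, the 8-word window, the whitespace tokenisation and the toy strings are all invented here, and real audits use far larger corpora and more careful text normalisation.

```python
def ngrams(text, n=8):
    """Return the set of n-word sequences in text (lowercased, whitespace-split)."""
    tokens = text.lower().split()
    return {tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)}


def contamination_rate(test_items, corpus_docs, n=8):
    """Fraction of test items sharing at least one n-gram with the corpus.

    Hypothetical helper for illustration only, not a standard API.
    """
    if not test_items:
        return 0.0, []
    corpus_ngrams = set()
    for doc in corpus_docs:
        corpus_ngrams |= ngrams(doc, n)
    # An item is flagged when any of its n-grams also appears in the corpus.
    flagged = [item for item in test_items if ngrams(item, n) & corpus_ngrams]
    return len(flagged) / len(test_items), flagged


# Toy data, made up for this example.
corpus = ["the quick brown fox jumps over the lazy dog near the river bank today"]
test_set = [
    "Q: the quick brown fox jumps over the lazy dog near what?",
    "What is the capital of France and why is it famous?",
]

rate, flagged = contamination_rate(test_set, corpus)
print(f"{rate:.0%} of test items overlap with the corpus")
```

A check like this only catches verbatim leakage. Paraphrased or translated copies of a test question slip straight through, which is part of why contamination is so hard to rule out.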

And then there’s generalisation, the real prize we claim to chase. A model might dominate language reasoning benchmarks but falter in long-term planning. It might excel on math datasets but struggle with real-world ambiguity. Yet we compress all of this into a single number, a single rank, as if intelligence were one-dimensional. Is it fair to crown a “best” model when strengths and weaknesses scatter across domains like uneven terrain?
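A tiny sketch shows how much a single number can hide. The two models and all of their scores below are invented purely for illustration. They are not results from any real system or benchmark.

```python
from statistics import mean

# Made-up per-domain scores for two hypothetical models.
scores = {
    "model_a": {"language_reasoning": 0.92, "math": 0.88, "long_term_planning": 0.41},
    "model_b": {"language_reasoning": 0.78, "math": 0.74, "long_term_planning": 0.66},
}


def overall(model_scores):
    """Collapse per-domain scores into one leaderboard-style average."""
    return mean(model_scores.values())


def best_per_domain(all_scores):
    """For each domain, name the model with the highest score."""
    domains = next(iter(all_scores.values())).keys()
    return {d: max(all_scores, key=lambda m: all_scores[m][d]) for d in domains}


leader = max(scores, key=lambda m: overall(scores[m]))
winners = best_per_domain(scores)

print("Leaderboard winner:", leader)
for domain, model in winners.items():
    print(f"  best at {domain}: {model}")
```

In this toy setup the averaged ranking crowns model_a, even though model_b is clearly stronger at long-term planning. Whoever reads only the headline number never sees that trade-off.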

The ecosystem around benchmarks raises its own questions. Different repositories use different tokenizers, scoring rules, and evaluation scripts. Results become difficult to reproduce, let alone compare. Some benchmarks saturate quickly. SuperGLUE is a commonly cited example, where later gains may reflect memorisation more than genuine capability. Others lack “life,” meaning they don’t continuously introduce fresh, unseen data. Without that, progress risks becoming an illusion: a loop of optimising for yesterday’s questions.

Then there’s a quieter, more uncomfortable issue: who controls the benchmarks? Private or paywalled evaluations promise reduced contamination, but they also shift power to a small group of curators. Without transparency in how data is selected or filtered, how do we know improvements reflect real capability rather than favourable curation? When access is restricted, scientific progress begins to depend on trust instead of verification.

And what about fairness? Unlike human exams, there are no proctors, no identity checks, no standardised oversight. Teams can fine-tune on test sets, submit repeatedly, or selectively report results. Cultural and linguistic biases seep into datasets, shaping outcomes in ways we barely measure. The playing field tilts ever so slightly, but decisively.
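Repeated submissions matter more than they might seem. The simulation below is a rough sketch under invented assumptions: a model whose true accuracy is fixed at 70%, a 200-question test, and 20 submissions that differ only by chance. None of these numbers come from a real leaderboard.

```python
import random


def simulate_submissions(true_accuracy=0.70, n_questions=200, n_submissions=20, seed=0):
    """Score the same underlying ability several times on a finite test.

    Each question is answered correctly with probability true_accuracy,
    so the scores differ from one submission to the next by luck alone.
    """
    rng = random.Random(seed)
    scores = []
    for _ in range(n_submissions):
        correct = sum(rng.random() < true_accuracy for _ in range(n_questions))
        scores.append(correct / n_questions)
    return scores


scores = simulate_submissions()
honest = sum(scores) / len(scores)   # what reporting every run would show
cherry_picked = max(scores)          # what reporting only the best run shows

print(f"average over all submissions: {honest:.3f}")
print(f"best single submission:       {cherry_picked:.3f}")
```

The best run is never below the average, and with enough attempts it drifts well above the model’s true ability. Reporting only that run makes the model look better without making it any better.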

So where does that leave us?

Benchmarks aren’t useless, but they are incomplete. They’re like the paper map a trekker carries through a forest: not detailed, certainly not enough, but better than nothing. The danger is forgetting the difference between the map and the forest.

The next “big thing” isn’t just building better models but building better ways to measure them: dynamic, transparent, resistant to gaming, and grounded in real-world complexity.

Until then, every leaderboard should come with a silent question:

Are we measuring intelligence or just our ability to package it neatly?

Be sure to share your views; I’ll be happy to hear them. Thanks for reading all this way!